livekit/livekit-wakeword

livekit-wakeword

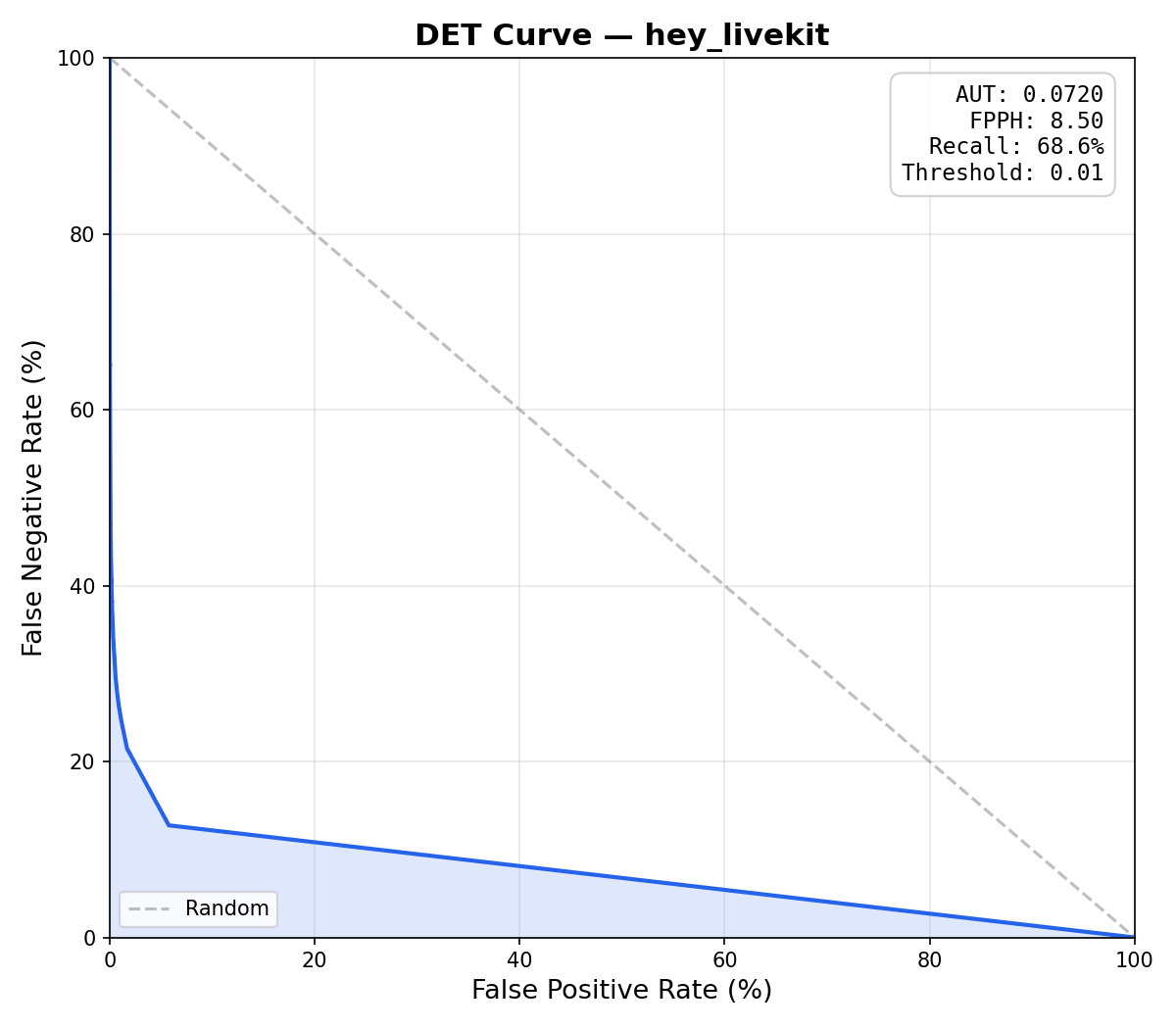

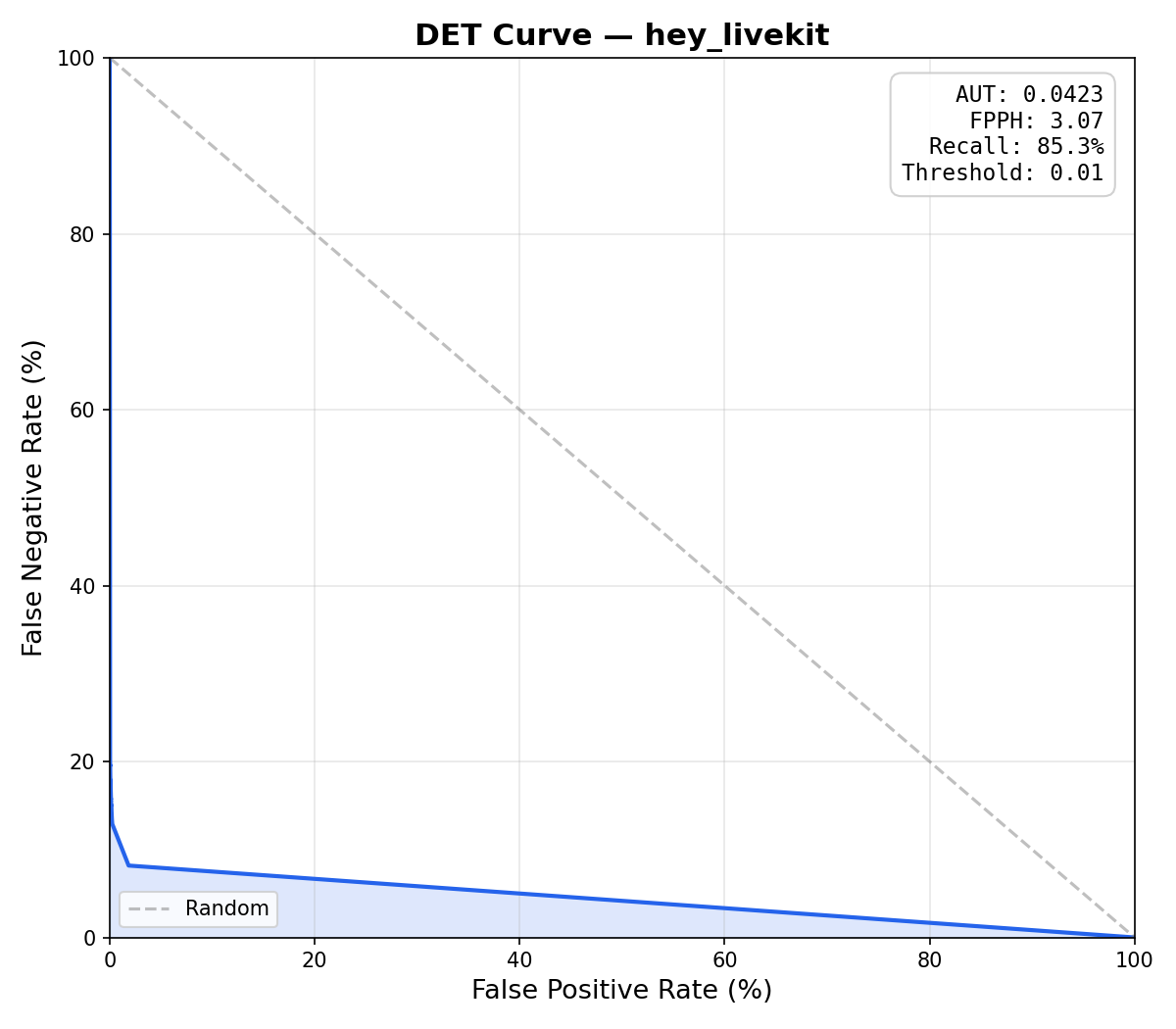

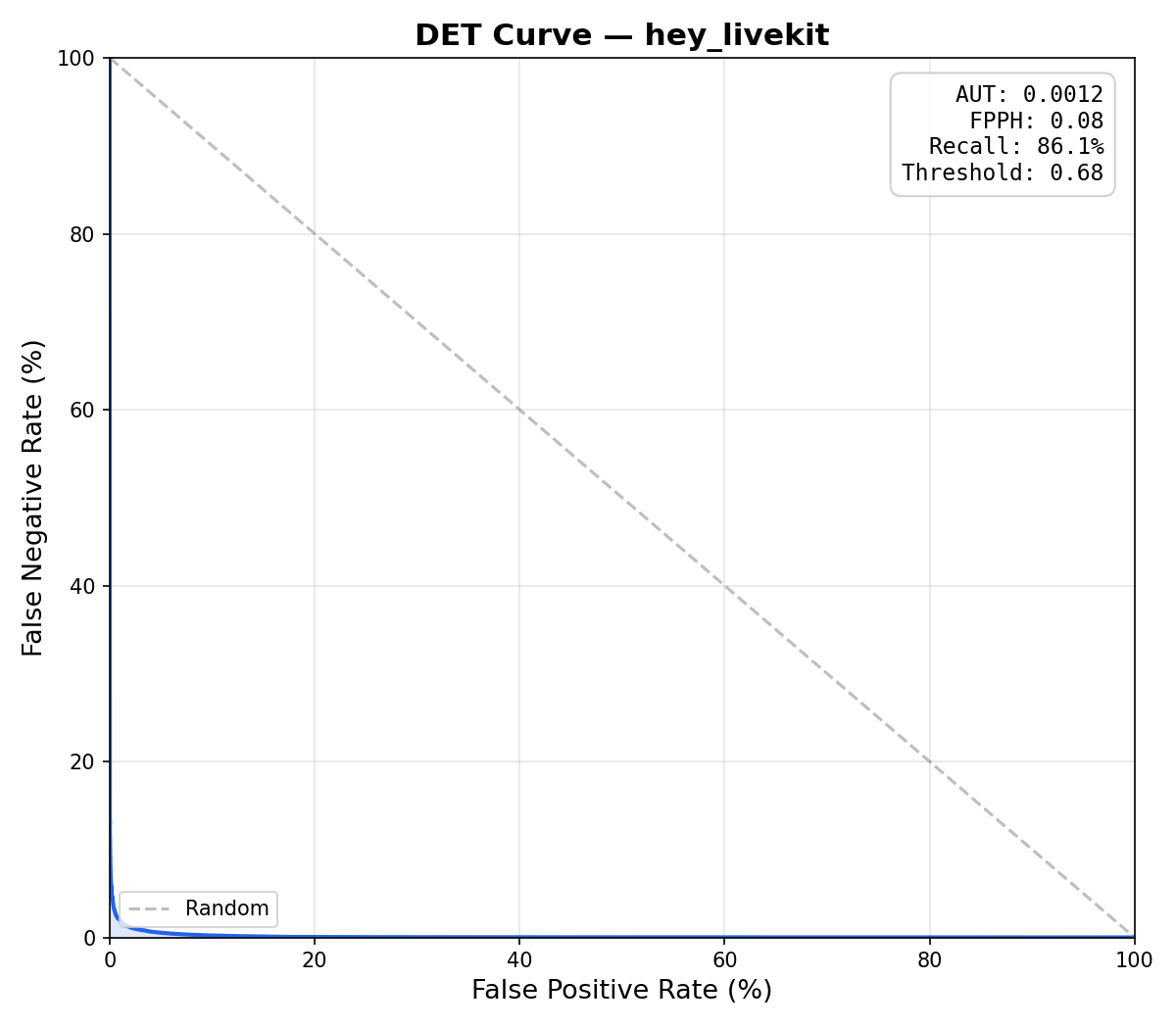

livekit-wakeword is an open-source wake word detection library published by LiveKit. It ships as a Python package (livekit-wakeword on PyPI), a Rust crate (livekit-wakeword on crates.io), and a Swift package targeting iOS 16+ and macOS 14+. The library reuses openWakeWord's audio front-end (a frozen mel-spectrogram ONNX model followed by Google's 96-dimensional speech embedding CNN) but replaces the flat DNN classification head with a Conv-Attention head that applies 1D temporal convolutions and multi-head self-attention across 16 timesteps of speech embeddings. On the "hey livekit" benchmark (15,000 positive clips, 25 hours of audio), the conv-attention head achieves 0.08 false positives per hour (FPPH) and 86.1% recall, versus 8.50 FPPH and 68.6% recall for vanilla openWakeWord: roughly a 100× reduction in false triggers.


Features

- Conv-Attention classifier — 1D Conv blocks + MultiheadAttention + MeanPool head that models the temporal ordering of phoneme embeddings; 60× lower AUT (area under the DET curve) than openWakeWord.

- Backward compatible with openWakeWord models and the openWakeWord library interface.

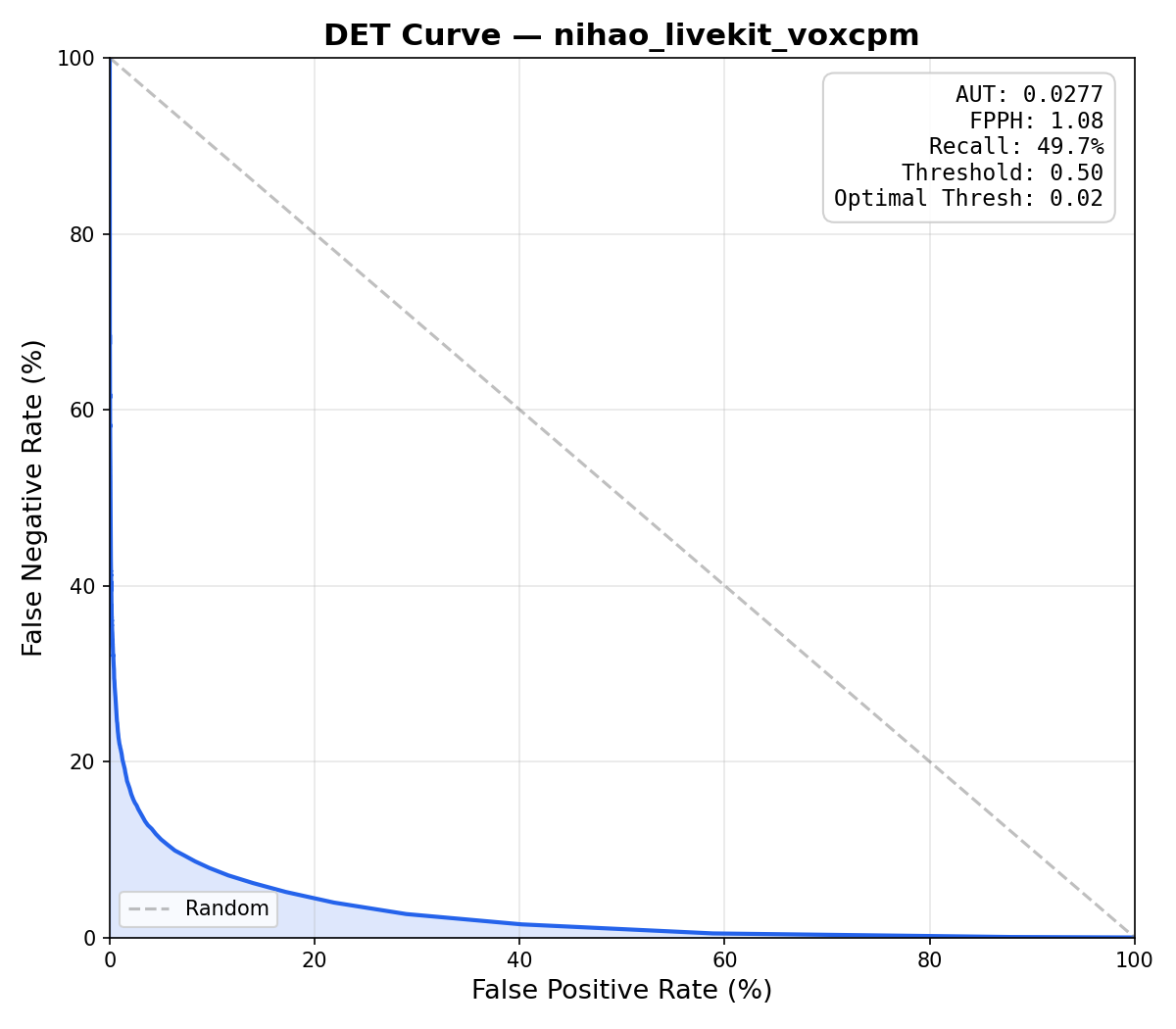

- Multilingual training — 30 languages plus 9 Chinese dialects via VoxCPM2 synthetic TTS; English uses Piper VITS with SLERP speaker blending.

- Three classifier architectures — DNN (flat FC), RNN (bi-LSTM), Conv+Attention (default); four size presets (tiny / small / medium / large).

- Single-YAML pipeline — one config file drives data generation, augmentation, feature extraction, 3-phase training, ONNX export, and DET-curve evaluation.

- Synthetic training data — adversarial negatives via CMU-dict phoneme substitution; no real audio recordings required.

- 3-phase adaptive training — focal loss + embedding mixup + checkpoint averaging to minimize FPPH while maximizing recall.

- INT8 quantization — optional ONNX INT8 quantization via the `--quantize` export flag.

- Python inference API — `WakeWordModel` (stateless predict) + `WakeWordListener` (async microphone capture with debouncing).

- Rust crate — mel and embedding models compiled into the binary; only the classifier ONNX is loaded at runtime; automatic resampling from 22050–384000 Hz.

- Swift package — iOS 16+ / macOS 14+; ONNX Runtime with the CoreML Execution Provider dispatches to ANE/GPU/CPU; `AVAudioConverter` handles mic resampling.

- Cloud GPU training — SkyPilot integration dispatches training jobs to cloud providers (Nebius example included).

- DET curve evaluation — AUT, FPPH, and recall metrics; evaluates any compatible ONNX model including openWakeWord models.

- uv-native — zero dependency-conflict setup via `uv sync --all-extras`.

Live examples

- iOS/macOS SwiftUI demo — runnable SwiftUI app for iOS + macOS using WakeWordListener with microphone capture.

- Wake Word–Triggered Agent — full LiveKit agent that starts a voice session on wake word detection.

Documentation

- Architecture Overview

- Data Generation

- Augmentation

- Feature Extraction

- Training

- Export & Inference

- Evaluation

Quick start

```bash
# Install (inference only)
pip install "livekit-wakeword[listener]"

# Install (full training pipeline)
pip install "livekit-wakeword[train,eval,export]"

# Download models and training data
livekit-wakeword setup --config configs/prod.yaml

# Train a custom wake word end-to-end
livekit-wakeword run configs/prod.yaml
```

Tags

- Maturity

  - Beta: actively developed but pre-1.0; no stable release tagged yet.

- Persona

  - Backend Engineer: engineers building server-side services, APIs, and infrastructure components.
  - AI Platform Engineer: engineers building infrastructure and tooling for AI agents and LLM-powered applications.

- License Category

  - Permissive: permissive open-source license (MIT, Apache 2.0, BSD) allowing use without copyleft obligations.

- Built With

  - ONNX Runtime: cross-platform inference engine for running exported wake word classifier ONNX models.
  - Rust: systems programming language used for the livekit-wakeword crate.
  - PyTorch: machine learning framework used for classifier training and checkpoint management.
  - Pydantic: used for validated YAML configuration models across the training pipeline.

- Security Posture

  - Unrated: OSSF Scorecard has not yet scanned this repository, so its security posture is unknown.

- Maintainer Model

  - Single-person: maintained primarily by one developer; the top contributor accounts for most commits.

- Form Factor

  - Library: importable package or module that adds capabilities to an existing application.

- Platform

  - Mobile: runs on iOS devices via the Swift package.
  - CLI: command-line interface as the primary interaction surface.
  - Server: runs on server or bare-metal hardware, not as a hosted cloud service.
  - Desktop: runs natively on desktop operating systems such as macOS and Linux.

- Use case

  - Drop-in replacement for openWakeWord: When my current openWakeWord deployment has too many false triggers, I want to swap in livekit-wakeword using the same ONNX model format and inference API, so I can reduce FPPH without rewriting my pipeline.
  - Train wake words in 30+ languages: When I am building a voice assistant for a non-English market, I want to train a wake word model in Chinese, Japanese, or Arabic using VoxCPM2 synthetic speech, so I can ship localized voice activation without building a multilingual dataset from scratch.
  - Wake word detection in iOS / macOS apps: When I am building a hands-free iOS or macOS app, I want a Swift wake word library that dispatches inference to the Neural Engine via CoreML, so I can activate features by voice with low battery impact and no network latency.
  - On-device wake word with low false-positive rate: When my wake word model triggers too often on background speech, I want a conv-attention classifier that models temporal phoneme ordering, so I can achieve fewer than 0.1 false positives per hour without sacrificing recall.
  - Train a custom wake word from scratch: When I want to add a custom voice trigger to my application, I want to train a wake word model from a text phrase using synthetic TTS data, so I can ship a production-quality hotword detector without recording real audio.
  - Run wake word training on cloud GPUs: When local GPU resources are insufficient for large-scale wake word training, I want to launch a SkyPilot job that runs the full pipeline on a cloud instance, so I can train production-scale models without owning a GPU server.
  - Trigger a LiveKit voice agent on wake word: When a user is in the same room as a LiveKit-connected device, I want the AI agent to wake and join the room automatically when it hears a predefined phrase, so I can build a hands-free voice assistant experience.

- Ecosystem

  - Python: built with the Python language.

- Status

  - Active: receives recent commits and releases; issues are being addressed.

- Features

  - Checkpoint averaging for robust final model: average the weights of top-performing checkpoints to produce a smoother, more generalizable final model.
  - Async microphone listener with debouncing: capture live microphone audio and emit detection events asynchronously with a configurable debounce.
  - DET curve evaluation (AUT, FPPH, recall): evaluate ONNX wake word models with DET curves, AUT, and false-positives-per-hour metrics.
  - Wake word detection: detect a specific spoken phrase in a continuous audio stream and return a confidence score.
  - ONNX export with optional INT8 quantization: export the trained PyTorch classifier to ONNX for cross-platform deployment; supports INT8 dynamic quantization.
  - Voice pipeline: wake word detection as the trigger for a real-time LiveKit voice agent session.
  - Multilingual TTS synthesis (30+ languages): generate wake word training data in 30 languages plus Chinese dialects via VoxCPM2 voice design.
  - Synthetic training data generation: generate positive and adversarial-negative training clips via TTS without recording real audio.

- License

  - Apache 2.0: Apache License 2.0, a permissive license with a patent grant and an attribution requirement.

Documentation

8 pages indexed · 1,169 words

README (livekit-wakeword, Wake Word Library): github.com/livekit/livekit-wakeword/blob/main/README.md

livekit-wakeword

An open-source wake word library for creating voice-enabled applications. Based on openWakeWord with streamlined training: generate synthetic data, augment, train, and export from a single YAML config.

Features:

- Conv-Attention classifier: 1D temporal convolutions + multi-head self-attention replace openWakeWord's flat DNN head, delivering 60x lower AUT and 100x fewer false positives per hour than openWakeWord

- Backward compatible with openWakeWord models and library

- Multilingual support: 30 languages plus 9 Chinese dialects via VoxCPM2 synthetic data generation

- Train anywhere: local machine, cloud, or spawn SkyPilot jobs

- Ships as Python package (pip/uv), Rust crate (crates.io), and Swift package (iOS 16+ / macOS 14+)

- Zero dependency headaches: uv handles everything

Benchmarks on "hey livekit" (15,000 positive clips, 45,084 negative clips, 25 hours of audio):

| Metric | openWakeWord (DNN) | livekit-wakeword (conv-attention) |
|---|---|---|
| AUT | 0.0720 | 0.0012 |
| FPPH | 8.50 | 0.08 |
| Recall | 68.6% | 86.1% |

License: Apache 2.0. Development Status: Beta (v0.2).
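The headline ratios quoted in the Features list follow directly from this table. A quick arithmetic check, using only the table's figures:

```python
# Figures copied from the "hey livekit" benchmark table.
openwakeword = {"aut": 0.0720, "fpph": 8.50, "recall": 0.686}
livekit_ww = {"aut": 0.0012, "fpph": 0.08, "recall": 0.861}

aut_ratio = openwakeword["aut"] / livekit_ww["aut"]      # lower AUT is better
fpph_ratio = openwakeword["fpph"] / livekit_ww["fpph"]   # false triggers per hour
recall_gain = livekit_ww["recall"] - openwakeword["recall"]

print(f"AUT: {aut_ratio:.0f}x lower")              # 60x
print(f"FPPH: {fpph_ratio:.0f}x lower")            # 106x, i.e. "100x" rounded
print(f"Recall: +{recall_gain * 100:.1f} points")  # +17.5
```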

Architecture Overview: github.com/livekit/livekit-wakeword/blob/main/docs/overview.md

Architecture Overview

livekit-wakeword uses a hybrid ONNX + PyTorch architecture. Two frozen ONNX models handle feature extraction (mel spectrogram and speech embeddings), while a lightweight PyTorch classifier head is trained per wake word.

Training Pipeline: Synthetic speech generated via VITS TTS with SLERP speaker blending → augmented with noise/reverb → frozen ONNX feature extractors → lightweight classifier head → exported to ONNX.

Inference Pipeline: Raw 16kHz audio → frozen ONNX feature extractors (mel spectrogram → 96-dim speech embedding) → trained classifier (ONNX) → detection score 0–1.

Why ONNX + PyTorch?

- Fast numpy-based inference without loading PyTorch at detection time

- Shared feature extractors across all wake words

- Minimal training data needed since only the small classifier head is trained

Three classifier architectures: DNN (flat), RNN (bi-LSTM), Conv+Attention (default). The conv-attention head uses 1D convolutions + multi-head self-attention over 16 timesteps of speech embeddings — best at distinguishing wake words from phonetically similar phrases.

Module map: config.py, cli.py, models/ (feature_extractor, classifier, pipeline), data/ (generate, augment, dataset, features), training/ (trainer, metrics), eval/ (evaluate), export/ (onnx), inference/ (model, listener).

Export & Inference API: github.com/livekit/livekit-wakeword/blob/main/docs/export-and-inference.md

Export & Inference

The export stage converts the trained PyTorch classifier to ONNX for deployment. The inference API provides WakeWordModel for prediction and WakeWordListener for async microphone detection.

WakeWordModel

Stateless prediction API. Pass ~2 seconds of 16kHz audio, receive confidence scores per wake word.
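Because `predict` is described as stateless, a streaming caller has to keep the most recent ~2 s of audio itself and re-score that window as new chunks arrive. A minimal plain-Python sketch of that buffering; `RollingAudioBuffer`, `stream_scores`, and the `predict_fn` argument (a stand-in for `model.predict`) are hypothetical names, not part of the livekit-wakeword API, and the 80 ms chunk size is an assumption:

```python
from collections import deque

SAMPLE_RATE = 16_000                       # Hz, the rate WakeWordModel expects
WINDOW_SAMPLES = 2 * SAMPLE_RATE           # ~2 s of context per predict() call


class RollingAudioBuffer:
    """Hold only the most recent `capacity` samples of a continuous stream."""

    def __init__(self, capacity=WINDOW_SAMPLES):
        # deque(maxlen=...) silently discards the oldest samples on overflow.
        self._samples = deque(maxlen=capacity)

    def push(self, chunk):
        self._samples.extend(chunk)

    def is_full(self):
        return len(self._samples) == self._samples.maxlen

    def window(self):
        return list(self._samples)


def stream_scores(chunks, predict_fn, name):
    """Yield one score per incoming chunk once a full 2 s window is buffered.

    `predict_fn` stands in for model.predict and must return {name: score}.
    """
    buffer = RollingAudioBuffer()
    for chunk in chunks:
        buffer.push(chunk)
        if buffer.is_full():
            yield predict_fn(buffer.window())[name]


# Usage with 80 ms chunks of silence and a dummy scorer (mean absolute amplitude).
chunks = [[0] * 1280 for _ in range(30)]
dummy_predict = lambda audio: {"hey_livekit": sum(abs(s) for s in audio) / len(audio)}
scores = list(stream_scores(chunks, dummy_predict, "hey_livekit"))
print(len(scores))  # 6: windows become available from the 25th chunk onward
```

Re-scoring the full window on every chunk is the simplest design; an implementation could instead cache mel frames and embeddings between calls.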

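```python
from livekit.wakeword import WakeWordModel

# Load one or more exported classifier ONNX files; scores are keyed by model name.
model = WakeWordModel(models=["hey_livekit.onnx"])

# audio_chunk: ~2 s of 16 kHz mono audio.
scores = model.predict(audio_chunk)
if scores["hey_livekit"] > 0.5:
    print("Wake word detected!")
```

WakeWordListener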

Async microphone detection with debouncing. Uses PyAudio to capture from default microphone at 16kHz/mono/int16.
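One plausible reading of `threshold` and `debounce` is sketched below: a detection is reported when a score exceeds the threshold, and further detections are suppressed for `debounce` seconds. The `Debouncer` class is hypothetical and only illustrates the idea; WakeWordListener's exact semantics may differ.

```python
import math


class Debouncer:
    """Report a detection when score > threshold, then stay quiet for `debounce` seconds."""

    def __init__(self, threshold=0.5, debounce=2.0):
        self.threshold = threshold
        self.debounce = debounce
        self._last_detection = -math.inf   # time of the last reported detection

    def update(self, score, now):
        """Return True if this (score, timestamp) pair should be reported as a detection."""
        if score <= self.threshold:
            return False
        if now - self._last_detection < self.debounce:
            return False                   # still inside the debounce window
        self._last_detection = now
        return True


# Timestamps in seconds; one utterance keeps the score high for several frames.
debouncer = Debouncer(threshold=0.5, debounce=2.0)
timeline = [(0.00, 0.2), (0.08, 0.9), (0.16, 0.95), (0.24, 0.8), (1.00, 0.9), (3.00, 0.7)]
fired = [t for t, score in timeline if debouncer.update(score, t)]
print(fired)  # [0.08, 3.0]
```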

```python
# Inside an async function; `model` is a WakeWordModel as above.
async with WakeWordListener(model, threshold=0.5, debounce=2.0) as listener:
    detection = await listener.wait_for_detection()
```

ONNX Export + INT8 Quantization

export_classifier() exports the trained PyTorch classifier to ONNX opset 18. Optional INT8 dynamic quantization via --quantize flag.
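The arithmetic behind INT8 weight quantization can be sketched in a few lines: each float weight is mapped to an 8-bit integer through a shared scale factor. This is a simplified symmetric, per-tensor scheme for illustration only. The source does not say which quantizer `export_classifier()` uses, and real quantizers pick scales and zero points in their own ways; the helper names are hypothetical.

```python
def quantize_int8(weights):
    """Symmetric per-tensor INT8 quantization: w ≈ scale * q with q in [-127, 127]."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 127 if max_abs > 0 else 1.0
    q = [max(-127, min(127, round(w / scale))) for w in weights]
    return q, scale


def dequantize_int8(q, scale):
    """Map the 8-bit integers back to approximate float weights."""
    return [v * scale for v in q]


weights = [0.81, -0.40, 0.05, -1.27, 0.0]
q, scale = quantize_int8(weights)
restored = dequantize_int8(q, scale)
max_error = max(abs(w - r) for w, r in zip(weights, restored))
print(q, f"scale={scale:.4f}", f"max error={max_error:.2e}")
```

The rounding error per weight is at most half the scale, which is why INT8 works well for weights with a modest dynamic range.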

Rust crate

```toml
[dependencies]
livekit-wakeword = "0.1"
```

The mel spectrogram and speech embedding models are compiled into the binary; only the classifier ONNX is loaded at runtime. Input audio is resampled automatically from 22050–384000 Hz to 16 kHz.
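The crate's resampler is not documented here. As a rough illustration of what resampling to 16 kHz involves, the plain-Python sketch below uses linear interpolation; the function name is hypothetical, and a production resampler would also low-pass filter before downsampling to avoid aliasing, which this sketch skips.

```python
def resample_linear(samples, src_rate, dst_rate=16_000):
    """Resample mono audio by linear interpolation (no anti-aliasing low-pass filter)."""
    if src_rate == dst_rate or not samples:
        return list(samples)
    n_out = int(len(samples) * dst_rate / src_rate)
    step = src_rate / dst_rate                 # input samples advanced per output sample
    out = []
    for i in range(n_out):
        pos = i * step
        left = int(pos)
        right = min(left + 1, len(samples) - 1)
        frac = pos - left                      # how far pos lies between left and right
        out.append(samples[left] * (1 - frac) + samples[right] * frac)
    return out


one_second_22k = [0.0] * 22_050
print(len(resample_linear(one_second_22k, 22_050)))  # 16000
```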

Swift package (iOS 16+ / macOS 14+)

WakeWordModel + WakeWordListener APIs match Python interface. ONNX Runtime with CoreML Execution Provider dispatches to ANE/GPU/CPU. A runnable SwiftUI demo lives in examples/ios_wakeword/.

Training Pipeline: github.com/livekit/livekit-wakeword/blob/main/docs/training.md

Training Pipeline

3-phase adaptive training with focal loss, embedding mixup, AdamW, and checkpoint averaging.

Phase 1 — Full Training: LR warmup → hold → cosine decay. Focal loss + negative weighting + embedding mixup. 50,000 steps by default.

Phase 2 — Refinement: 0.1× LR for steps/10 steps (5,000 with the default 50,000). The negative weight is doubled adaptively whenever FPPH exceeds the target.

Phase 3 — Fine-Tuning: 0.01× LR for steps/10 steps.
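The three-phase schedule can be sketched as plain functions. The peak learning rate (1e-3) and the warmup and hold fractions are illustrative assumptions that the source does not specify; only the 1× / 0.1× / 0.01× scaling, the steps/10 phase lengths, and the FPPH-triggered doubling come from the description above. All function names are hypothetical.

```python
import math


def phase1_lr(step, total_steps, peak_lr, warmup_frac=0.05, hold_frac=0.25):
    """Phase 1 schedule: linear warmup -> constant hold -> cosine decay to zero.

    warmup_frac and hold_frac are illustrative assumptions, not project defaults.
    """
    warmup = max(1, int(total_steps * warmup_frac))
    hold = int(total_steps * hold_frac)
    if step < warmup:
        return peak_lr * (step + 1) / warmup
    if step < warmup + hold:
        return peak_lr
    decay_steps = max(1, total_steps - warmup - hold)
    progress = min(1.0, (step - warmup - hold) / decay_steps)
    return peak_lr * 0.5 * (1.0 + math.cos(math.pi * progress))


def three_phase_plan(steps=50_000, peak_lr=1e-3):
    """(phase name, number of steps, base LR) for the three training phases."""
    return [
        ("full_training", steps, peak_lr),
        ("refinement", steps // 10, peak_lr * 0.1),
        ("fine_tuning", steps // 10, peak_lr * 0.01),
    ]


def adapt_negative_weight(neg_weight, fpph, target_fpph):
    """Phase 2 rule as described: double the negative weight while FPPH is above target."""
    return neg_weight * 2 if fpph > target_fpph else neg_weight


for name, n_steps, lr in three_phase_plan():
    print(f"{name:>13}: {n_steps:>6} steps at base LR {lr:g}")
```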

Checkpoint Averaging: Select top checkpoints by FPPH + recall + accuracy, average their weights for a smoother final model.
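Checkpoint averaging is an element-wise mean over the parameters of the selected checkpoints. The sketch uses flat Python lists in place of PyTorch tensors, and the ranking key (lowest FPPH, then highest recall, then highest accuracy) is an assumption about how the three criteria are combined; the helper names are hypothetical.

```python
def select_top(checkpoints, k=3):
    """Rank by lowest FPPH, then highest recall, then highest accuracy (assumed ordering)."""
    ranked = sorted(checkpoints, key=lambda c: (c["fpph"], -c["recall"], -c["accuracy"]))
    return ranked[:k]


def average_weights(state_dicts):
    """Element-wise mean of same-named parameters (flat lists stand in for tensors)."""
    n = len(state_dicts)
    return {
        name: [sum(vals) / n for vals in zip(*(sd[name] for sd in state_dicts))]
        for name in state_dicts[0]
    }


checkpoints = [
    {"fpph": 0.10, "recall": 0.85, "accuracy": 0.97, "weights": {"w": [1.0, 2.0]}},
    {"fpph": 0.05, "recall": 0.84, "accuracy": 0.96, "weights": {"w": [3.0, 4.0]}},
    {"fpph": 0.05, "recall": 0.86, "accuracy": 0.95, "weights": {"w": [5.0, 0.0]}},
    {"fpph": 0.40, "recall": 0.90, "accuracy": 0.98, "weights": {"w": [9.0, 9.0]}},
]
best = select_top(checkpoints, k=3)
averaged = average_weights([c["weights"] for c in best])
print(averaged)  # {'w': [3.0, 2.0]}
```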

Loss Function: Focal loss (γ=2.0) down-weights well-classified examples, which removes the need for manual hard-example mining. Negative samples are additionally weighted per sample, up to 1500× by the end of phase 1.
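Focal loss multiplies binary cross-entropy by (1 − p_t)^γ, where p_t is the probability the model assigns to the correct class. A minimal sketch with γ = 2.0 as documented; it omits the optional α class weight (not mentioned in the source) and the per-sample negative weights, which would simply multiply each term.

```python
import math


def bce(p, y, eps=1e-7):
    """Plain binary cross-entropy for one prediction p = P(wake word), label y in {0, 1}."""
    p = min(max(p, eps), 1 - eps)
    return -math.log(p if y == 1 else 1 - p)


def focal_loss(p, y, gamma=2.0, eps=1e-7):
    """Binary focal loss: cross-entropy scaled by (1 - p_t) ** gamma."""
    p = min(max(p, eps), 1 - eps)
    p_t = p if y == 1 else 1 - p          # probability given to the true class
    return -((1 - p_t) ** gamma) * math.log(p_t)


# An easy negative (p = 0.05) is down-weighted far more than a hard negative (p = 0.6).
for p in (0.05, 0.6):
    print(f"p={p}: BCE={bce(p, 0):.4f}  focal={focal_loss(p, 0):.4f}")
```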

Regularization: Label smoothing (ε=0.05) + embedding mixup (Beta(0.2,0.2) interpolation in embedding space).
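Both regularizers are short to express. The sketch below uses one common binary label-smoothing convention (targets become 1 − ε/2 and ε/2) and draws the mixup coefficient from Beta(0.2, 0.2) with Python's `random.betavariate`; the exact smoothing formula and whether labels are mixed alongside embeddings are assumptions, and the helper names are hypothetical.

```python
import random


def smooth_label(y, eps=0.05):
    """Binary label smoothing (one common convention): 1 -> 1 - eps/2, 0 -> eps/2."""
    return y * (1 - eps) + 0.5 * eps


def mixup(emb_a, y_a, emb_b, y_b, alpha=0.2, rng=random):
    """Blend two (16 x 96) embedding sequences and their labels with lam ~ Beta(alpha, alpha)."""
    lam = rng.betavariate(alpha, alpha)
    mixed = [
        [lam * a + (1 - lam) * b for a, b in zip(row_a, row_b)]
        for row_a, row_b in zip(emb_a, emb_b)
    ]
    return mixed, lam * y_a + (1 - lam) * y_b, lam


rng = random.Random(0)
positive = [[1.0] * 96 for _ in range(16)]   # toy embedding of a wake-word clip
negative = [[0.0] * 96 for _ in range(16)]   # toy embedding of a negative clip
mixed, y_mixed, lam = mixup(positive, smooth_label(1), negative, smooth_label(0), rng=rng)
print(f"lambda={lam:.3f}, mixed label={y_mixed:.3f}")
```

With α = 0.2 the Beta distribution is U-shaped, so most draws land near 0 or 1 and each mixed sample stays close to one of its two sources.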

Three classifiers: DNN (flatten → FC layers), RNN (Bi-LSTM, 2 layers), Conv+Attention (Conv1D blocks → MultiheadAttention → MeanPool → Linear → Sigmoid). Size presets: tiny/small/medium/large.

YAML config minimum:

```yaml
model_name: hey_robot
target_phrases: ["hey robot"]
n_samples: 10000
model:
  model_type: conv_attention
  model_size: small
steps: 50000
target_fp_per_hour: 0.2
```

Data Generation Pipeline: github.com/livekit/livekit-wakeword/blob/main/docs/data-generation.md

Data Generation Pipeline

Synthesizes positive and negative audio clips using a pluggable TTS backend: Piper VITS with SLERP speaker blending (English default) or VoxCPM2 voice design (multilingual).

Multilingual wake words require tts_backend: voxcpm. 30 languages supported: Arabic, Burmese, Chinese, Danish, Dutch, English, Finnish, French, German, Greek, Hebrew, Hindi, Indonesian, Italian, Japanese, Khmer, Korean, Lao, Malay, Norwegian, Polish, Portuguese, Russian, Spanish, Swahili, Swedish, Tagalog, Thai, Turkish, Vietnamese — plus 9 Chinese dialects.

Piper VITS + SLERP: Speaker blending via Spherical Linear Interpolation over the speaker embedding space produces diverse voice variety from a single checkpoint.
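SLERP interpolates along the arc between two embedding vectors rather than along the straight chord between them, so blended speakers keep a norm comparable to the originals. A minimal sketch on toy 2-D vectors; how the pipeline obtains Piper's speaker embeddings and picks the blend fraction `t` is not described here, so those parts are left out.

```python
import math


def slerp(a, b, t):
    """Spherical linear interpolation between vectors a and b at fraction t in [0, 1]."""
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    cos_omega = sum(x * y for x, y in zip(a, b)) / (norm_a * norm_b)
    omega = math.acos(max(-1.0, min(1.0, cos_omega)))   # angle between the vectors
    sin_omega = math.sin(omega)
    if sin_omega < 1e-6:
        # (Anti)parallel vectors: the arc is undefined, fall back to linear interpolation.
        return [(1 - t) * x + t * y for x, y in zip(a, b)]
    w_a = math.sin((1 - t) * omega) / sin_omega
    w_b = math.sin(t * omega) / sin_omega
    return [w_a * x + w_b * y for x, y in zip(a, b)]


# Toy 2-D "speaker embeddings"; real ones are higher-dimensional.
speaker_a, speaker_b = [1.0, 0.0], [0.0, 1.0]
blends = [slerp(speaker_a, speaker_b, t) for t in (0.0, 0.25, 0.5, 0.75, 1.0)]
```

For these two unit vectors every blend stays on the unit circle, whereas the linear midpoint [0.5, 0.5] would shrink to a norm of about 0.707.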

Adversarial negative generation: Phoneme substitution via CMU dict generates phonetically similar phrases as hard negatives — training the model to reject near-miss sounds.
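The substitution step can be sketched as follows. The pronunciations are written by hand in CMU-dict (ARPAbet) style, and the `CONFUSABLE` table is a hypothetical example; the source only says that CMU-dict phoneme substitution is used, not which substitutions are allowed or how variants are turned back into text for TTS.

```python
# Hand-written ARPAbet pronunciations (CMU dict style) and a hypothetical table of
# acoustically confusable phonemes; the project's real substitution rules may differ.
PRONUNCIATIONS = {"hey": ["HH", "EY1"], "robot": ["R", "OW1", "B", "AA2", "T"]}
CONFUSABLE = {"HH": ["K"], "EY1": ["AY1", "EH1"], "B": ["P", "D"], "T": ["D"]}


def to_phonemes(phrase):
    return [ph for word in phrase.split() for ph in PRONUNCIATIONS[word]]


def single_substitutions(phonemes):
    """Yield every sequence that differs from `phonemes` in exactly one position."""
    for i, ph in enumerate(phonemes):
        for alt in CONFUSABLE.get(ph, []):
            yield phonemes[:i] + [alt] + phonemes[i + 1:]


target = to_phonemes("hey robot")
near_misses = list(single_substitutions(target))
for seq in near_misses:
    print(" ".join(seq))
```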

Default sample counts: 10,000 positive train / 2,000 positive test / 10,000 negative train / 2,000 negative test / 200 background train / 40 background test.

Cloud GPU training: SkyPilot integration. `sky launch skypilot/train.yaml` dispatches training to Nebius (or another cloud provider).

Augmentation Pipeline: github.com/livekit/livekit-wakeword/blob/main/docs/augmentation.md

Augmentation Pipeline

Applies realistic audio transformations to synthetic TTS clips via AudioAugmentor.

Per-sample augmentations: SevenBandParametricEQ (25% probability) and TanhDistortion (25% probability), via the audiomentations library.

RIR convolution: 50% probability — convolves with room impulse response via FFT to simulate acoustic environment.

Background mixing: Random SNR 5–15 dB. Background clips also trained as standalone negative class (pure ambient = not a wake word).
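Mixing at a target SNR means scaling the background clip so that the speech-to-noise RMS ratio, in decibels, equals the sampled value. A plain-Python sketch, assuming SNR is defined on RMS levels over the whole clip (the augmentor's exact definition isn't given); `mix_at_snr` is a hypothetical helper.

```python
import math
import random


def rms(x):
    return math.sqrt(sum(v * v for v in x) / len(x))


def mix_at_snr(speech, noise, snr_db):
    """Scale noise so that 20*log10(rms(speech) / rms(scaled noise)) == snr_db, then add."""
    target_noise_rms = rms(speech) / (10 ** (snr_db / 20))
    gain = target_noise_rms / rms(noise)
    return [s + gain * n for s, n in zip(speech, noise)], gain


rng = random.Random(42)
speech = [math.sin(2 * math.pi * 440 * i / 16_000) for i in range(16_000)]  # 1 s tone
noise = [rng.uniform(-1, 1) for _ in range(16_000)]                          # toy background
snr = rng.uniform(5, 15)  # the documented 5–15 dB range
mixed, gain = mix_at_snr(speech, noise, snr)
```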

Clip alignment: Positive clips end-aligned with 200ms jitter (simulates real detection scenario). Negative clips center-padded.
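A sketch of both alignment rules, assuming a fixed 2 s training clip and reading "200ms jitter" as 0 to 200 ms of trailing silence after the wake word; the clip length, the padding value (zeros), and the jitter direction are all assumptions, and the helper names are hypothetical.

```python
import random

SAMPLE_RATE = 16_000
CLIP_SAMPLES = 2 * SAMPLE_RATE        # assumed fixed training-clip length (~2 s)
MAX_JITTER = int(0.2 * SAMPLE_RATE)   # up to 200 ms


def end_align(clip, rng, total=CLIP_SAMPLES, max_jitter=MAX_JITTER):
    """Place the clip near the end of a zero buffer, leaving 0-200 ms of trailing silence."""
    clip = clip[-(total - max_jitter):]          # keep the tail if the clip is too long
    jitter = rng.randint(0, max_jitter)
    lead = total - len(clip) - jitter
    return [0.0] * lead + clip + [0.0] * jitter


def center_pad(clip, total=CLIP_SAMPLES):
    """Pad a clip symmetrically to `total` samples (center-crop if it is longer)."""
    if len(clip) >= total:
        start = (len(clip) - total) // 2
        return clip[start:start + total]
    left = (total - len(clip)) // 2
    return [0.0] * left + clip + [0.0] * (total - len(clip) - left)


rng = random.Random(0)
positive = end_align([0.5] * 12_000, rng)   # 0.75 s toy "wake word" clip
negative = center_pad([0.5] * 20_000)       # 1.25 s toy negative clip
print(len(positive), len(negative))
```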

Stacking rounds: Each round applies transforms to the previous round's output, producing progressively degraded audio for robust training.

Feature Extraction Pipeline: github.com/livekit/livekit-wakeword/blob/main/docs/feature-extraction.md

Feature Extraction Pipeline

Converts augmented audio clips to fixed-size embedding arrays using two frozen ONNX models.

MelSpectrogramFrontend: 32 mel bands, 10ms hop, 60–3800 Hz range. Output: (batch, time_frames, 32).

SpeechEmbedding: Google speech_embedding CNN (~330k parameters). Sliding window of 76 frames with a stride of 8 frames (80 ms). Output: (batch, n_windows, 96).

Timestep selection: the last 16 windows from a ~2 s audio window are kept, left-padded if fewer are available. Final shape: (batch, 16, 96).
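The shape arithmetic can be checked in a few lines. At a 10 ms hop, 2 s of audio gives about 200 mel frames; a 76-frame window with stride 8 then yields (200 − 76) // 8 + 1 = 16 embeddings, which is why roughly 2 s fills the 16-timestep input (strictly, 76 + 15 × 8 = 196 frames, about 1.96 s, suffice). The sketch ignores STFT edge frames and assumes zero vectors for left padding; the helper names are hypothetical.

```python
MEL_HOP_MS = 10          # 10 ms hop -> 100 mel frames per second
EMB_WINDOW = 76          # mel frames per speech-embedding window
EMB_STRIDE = 8           # 8 frames = 80 ms between embeddings
N_TIMESTEPS = 16
EMB_DIM = 96


def n_mel_frames(n_samples, sample_rate=16_000):
    # Approximation: one frame per hop, ignoring the STFT's edge/padding frames.
    return n_samples * 1000 // (sample_rate * MEL_HOP_MS)


def n_windows(mel_frames):
    return 0 if mel_frames < EMB_WINDOW else (mel_frames - EMB_WINDOW) // EMB_STRIDE + 1


def last_timesteps(embeddings, n=N_TIMESTEPS, dim=EMB_DIM):
    """Keep the last n embeddings, left-padding with zero vectors if there are fewer."""
    tail = embeddings[-n:] if n else []
    pad = [[0.0] * dim for _ in range(n - len(tail))]
    return pad + tail


frames = n_mel_frames(32_000)       # 2 s of 16 kHz audio
windows = n_windows(frames)
features = last_timesteps([[1.0] * EMB_DIM for _ in range(windows)])
print(frames, windows, len(features), len(features[0]))  # 200 16 16 96
```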

Shared frozen ONNX feature extractors mean only the small classifier head (tiny/small/medium/large) needs training per wake word — same front-end as openWakeWord (backward compatible).

Memory-mapped .npy batch generator for efficient dataset loading without loading full arrays into RAM.

Evaluation (DET curves, AUT, FPPH): github.com/livekit/livekit-wakeword/blob/main/docs/evaluation.md

Evaluation

The evaluation stage runs the exported ONNX model against held-out validation data and produces a DET (Detection Error Tradeoff) curve, AUT score, and summary metrics.

AUT (Area Under the DET curve): Primary aggregate metric. Lower is better (0 = perfect). livekit-wakeword conv-attention: 0.0012 vs openWakeWord DNN: 0.0720.

FPPH (False Positives Per Hour): How many times the model falsely triggers per hour of non-wake-word audio. livekit-wakeword: 0.08 vs openWakeWord: 8.50.

Recall: True positive rate. livekit-wakeword: 86.1% vs openWakeWord: 68.6%.

Threshold optimization: Scans 0.01–0.99 to maximize recall while keeping FPPH ≤ target_fp_per_hour.

```bash
# Evaluate any compatible ONNX model (including openWakeWord models)
uv run livekit-wakeword eval configs/hey_livekit.yaml -m /path/to/model.onnx
```

Outputs: a DET curve plot (.png) and a metrics JSON file. Compatible with any ONNX model that shares the (1, 16, 96) input contract.